Photo by Igor Bumba on Unsplash

AI in data engineering - Part 1

Building custom AI tools for data engineering purposes

Preface 🎬

I try to avoid engaging in buzzwords and hype content, but I have to admit, my interest in the applications of AI in data engineering has been sparked recently.

So I seek to share my excitement with you by force. Consider these as my notes in introducing how we could create purpose-built AI tools that could at the very least address a few of the common challenges faced by data engineers today.

Although the article is addressing data engineering use cases, some of the ideas explored here can certainly extend into other domains to reap efficiency gains, financial increases and other benefits valuable in these fields.

Challenges in data engineering⚠️

It is important to contextualize some of the common issues data engineers face so we can understand why and how the AI tools can provide the value promised.

Let’s explore:

Data Quality📊

In simple terms, data quality is a measure of how accurate, clean and complete data is. The data our pipelines generate should be error-free and accurately mirror real-world events.

Reliable data is vital for making decisions, whereas poor data quality could mislead company initiatives and dent the credibility of the data engineering teams responsible for it.

Some of the challenges for data engineers in data quality include:

Complex transformations 🔄- Converting raw data into a useable format involves complex cleaning and manipulation processes that could lead to errors and oversights impacting data quality

Designing data validations 🧪- Confirming that the data reflect real-world events requires thoughtful testing approaches and a significant investment of time from engineers who must delve into the details. If the validations are poorly designed, they could fail to catch inaccuracies, therefore degrading the data quality.

Missing or incomplete data ❌- Having data missing or incomplete could pose a risk to data integrity. Having a process to handle this effectively and making decisions about whether to fill in missing values or drop columns that contain them is a challenge for the engineers. If missing data is not managed properly, the analytics reporting would have gaps and inaccuracies that would stain the decision-making process.

Data Governance🏛️🔒

This involves all the processes, policies and practices of a company to make sure data is available, useable and secure for business operations.

A good data governance framework emphasizes

fully utilizing data resources

strengthening security protocols in a safe and undisruptive manner

providing the right teams and users with the data assets they need to perform their jobs

staying on top of the latest industry regulations and integrating them across the organisation without hindering engineering and innovation efforts

Some of the challenges for data engineers in data governance include:

Balancing regulatory compliance ⚖️- Keeping updated with the latest data usage and privacy rules while smoothly integrating them across the company is complex and demanding. Poor compliance that leads to a breach of privacy laws could lead to severe penalties and reputational damage to the company — ask Cambridge Analytica.

Secure access management 🔑- Deciding on who should have access to what and then implementing these access permissions securely is a challenging endeavour, especially when a business expands its teams and data resources. Unauthorized access or hacks can result in data leaks.

Effective data stewardship 📝- Data needs to be managed, categorized and documented properly, however many engineering teams can agree this is easier said than done. Poor data stewardship can add to the inaccuracies in reporting and misinformed decision-making.

Data Orchestration🎛️🌐

Data orchestration involves coordinating data workflows and their operations across different services. This could involve managing workflows that integrate external applications with multiple databases, and scheduling periodic ingestion jobs from the cloud into the main data platform, among others.

Some of the challenges for data engineers in data orchestration include:

Complex workflows 🕸️- Managing workflows connected to several data sources requires data engineers to monitor changes made to the sources because they could impact how downstream pipelines and processes operate. If the protocols for managing these changes are poor, the data pipelines that depend on them will fail to deliver the expected data and cause delays in troubleshooting them.

Automation setup 🔄- Having automated tools and processes makes any company (and C-suite team) look cool to their peers, but setting up effective automation strategies that handle repetitive or complex tasks is challenging, especially in environments where data workflows are always evolving. If this isn’t managed correctly, it can lead to creating inefficiencies and issues instead of solving them i.e. the automations introduce more technical debt than reducing them.

Error management🔍 — A good data workflow should include a mechanism for handling failures so that downstream processes are not interrupted, but designing these mechanisms is not always an easy feat. However, if the error handling mechanisms are not in place, issues could impact business operations.

Imagine a data engineer running a few DAG operations in Airflow that handles the data flows across a single PostgreSQL database, a Python ETL pipeline and a Tableau dashboard. The business expands rapidly, and so does the data with it. Now more databases in SQL Server are created to accommodate the increase in structured data coming in, and the new forms of unstructured data (videos, images and audio) is stored in AWS S3 buckets. But now this means setting up new DAGs and Python operators to manage each new ETL pipeline being set up. More DAGs and data sources are added monthly, and becoming more complicated to manage internally. Unaccounted changes to the data sources and other overlooked errors begin to create unintended consequences to business operations.

Data Observability👁️🖥️

Data observability involves having a holistic view of every aspect of the data lifecycle. In other words, this means you have a transparent view over every stage involved in getting data from its sources to its target location at a high level, including where the data originates from, how it’s manipulated and where it’s stored.

Some of the challenges for data engineers in data observability include:

Sustained monitoring 👨💻 — In spaces like cyber-security, it’s vital to maintain continuous oversight of data health and virtual activities with real-time monitoring tools, otherwise cybercriminals can use malware and other harmful techniques to harm the company’s IT infrastructure

Tracking data lineage 👁️- Engineers can find out why certain columns are not appearing in a target table using an observability tool to track where the columns may have been dropped at previous stages, but this visual tracking mechanism becomes harder to use when a simple data ecosystem inevitably grows into a complex one. This could slow down the efforts in analysing the root cause of issues and troubleshooting accordingly.

Detecting and resolving errors ⚙️— Some issues pointed out from an observability tool may not require domain knowledge to tackle them, while others may demand a more comprehensive understanding of certain situations and expected behaviours — how would engineers handle issues or incorrect outputs that go undetected by the tool and are pointed out by the end user?

Data Transformation⚙️

Data transformation is turning raw data into a clean and usable format. This involves manipulating, normalizing, and integrating data to make this possible. Most time spent by data engineering teams is within this activity. But why?

Some of the issues data engineers encounter during data transformations include:

Planning data transformations🗃️ — Depending on the size of the data pipeline, transformation approaches can take a significant amount of time to plan and convert into executable logic via code or low/no-code solutions. Poor transformation planning could lead to introducing unintended bugs and errors into the transformation layer, which adds more time to the debugging process.

Diverse data sources 🌐- With data originating from different data sources, each may have unique formats and structures, which would require a conversion job to transform them all into a standardized format, which can be time-consuming for the data engineers. For instance, a marketing campaign may source data from different channels like email, social media and direct paper mail, and each would require different transformation logic to standardize. Without a clear approach to this, standardizing data could lead to unnecessary complexities and delays in development.

Lengthy debugging ⌛🐛- How many times has a data engineer spent hours debugging transformation logic on a critical reporting pipeline, get their changes peer-reviewed, only to have the stakeholder pointing out anomalies or errors caused by a bug that went undetected?

Code smells👃- For data platforms with coded transformation layers, supporting the efforts in increasing the code quality by refactoring the source code to make it adhere to the programming language’s best practices can be difficult, especially when each engineer has their coding styles and other competing priorities across the team requires their attention.

Let’s imagine a group of data engineers that also work for a healthcare start-up, and are tasked with integrating patient data from different data sources, like EMRs, smartwatches and hospital databases stored as Delta Lake tables in Azure ADLS containers for their predictive analytics. Instead of designing an ideal approach to standardizing the data coming from these different sources, they go straight into coding the Python scripts to build the platform. With each engineer coming in with their unique coding style, it’s difficult to understand what the code does, especially when each engineer is not 24/7 available to query with. This rush leads to bugs and errors popping up on the platform that harm the start-up’s analytics efforts.

Data Modelling📈

Data modelling is identifying and establishing relationships between objects belonging to different tables. In simple terms, it involves building a blueprint or model that demonstrates how data is organised and stored in a database or data warehouse. Data models are required to understand how data tables within a data store are connected to create powerful analytics tools for different downstream teams and users.

Some of the challenges for data engineers in data modelling include:

Managing complex relationships 👯- As the number of tables and their corresponding relationships increase in a data store, tracking and correctly representing their connections becomes progressively more challenging.

Methodology adherences 📚- To avoid accruing technical debt when modelling data, engineers usually adopt a methodology like Kimball and Inmon and follow the principles each adheres to. Failure to adhere to the methodology of the principles engineering teams may adhere to means they may not gain the full data quality benefits promised by them, and therefore creating ineffective (and inaccurate) data models

Data requirements📃 — Gathering the data requirements from the targeted users takes time, and more times than not, the stakeholders only have a generic idea of the data they wish to see, which means key data personnel need to manage the requirements gathering lifecycle, from the specific reports the business needs to the granularity of the date fields, and also the considerations around the SCDs (slowly changing dimensions).

Keep models up-to-date 🗓️- Businesses evolve, and so must their data models. There may be a request for more granular reports that represent the new expectations from the business, which means a re-examination and update on the relationships between each table, therefore adding to the complexity of this process. Working with outdated data models means the reports and solutions depending on them are providing low-quality and inaccurate information.

As an example, imagine a data engineer working for an established retail firm, and is responsible for developing a data model connecting the customers, sales and product tables to support their marketing campaigns. There are plenty of tables to perform this one, so they need to think clearly about their approach here. If the engineer fails to gather the precise requirements from the end users, select the right methodology that suits these unique requirements, and keep the models up to date, the marketing campaign may design strategies and make decisions that lead the initiative to a disaster.

Data Storage☁️💾

Data storage is archiving data in secure and easy-to-access locations. This could include local storage on laptops, mobile devices and desktop computers, or a larger scale like cloud storage options including data lakes, data lakehouses and data centres.

Some of the challenges involved with this include:

Slower file retrievals 🚄- As the quantity of enterprise data increases over time, it also becomes progressively difficult to find specific files and their locations, especially if the folders and files are not organised efficiently. This results in more time wasted on finding data that could be invested in more valuable activities.

Difficult folder formation 🗃️- It’s not easy coming up with an effective way to organize data, especially an optimal folder structure that’s easy to manage and scale over time. It requires careful consideration of the business and project context as well as the existing architecture

Cost and performance hits 💸- Every file stored comes at a performance and financial cost, so engineers would require better methods in delivering live cost usage information easily accessible and user-friendly to support the efforts in optimizing storage costs.

A small company starts to store an increasing number of video, audio and text files in Azure Blob containers after outgrowing their local storage. However, there isn’t a consistent naming convention provided for files and folders, which means a significant amount of time is spent manually searching through hundreds of containers and thousands of files each time any member of staff needs to retrieve data for different purposes.

Data Testing🧪

Testing data is an important step in data engineering for ensuring the integrity and accuracy of the data processed. It involves running validation checks on different components of your data pipeline to confirm each stage is yielding the desired results and operating as expected.

However, it’s not free of challenges, let’s explore:

Designing a testing framework 🎨- Developing ideal testing approaches to confirm each data pipeline component is checked and functioning as expected can be mentally taxing, but without one engineers can’t confidently roll out pipelines that are behaving as expected at every stage and/or inaccurate data transferred across the business leading to the wrong business decisions

Delivering test results to stakeholders📩 — Conducting the tests and sending the results to the relevant stakeholders frequently can be time-consuming and cumbersome over time, especially if stakeholders prefer non-standard reporting formats that static email reports may not be able to answer. If stakeholders can’t access test results in a format they can understand, it means they are unable to have an opportunity in gaining confidence in the test frameworks developed

An accounting firm responsible for auditing financial statements relies on supporting documents, like invoices, emails and receipts. The data engineering team are required to build the data pipelines connecting their client’s data warehouses to the firm’s reporting tool to understand their revenue, expenses and other low-level metrics. A testing framework in PyTest needs to be adopted but requires brainstorming sessions to iron out the important factors to make this a success, the output formats of the test results and the teams + users to deliver them to. Without this, inaccurate audit reports would be created and sent to the wrong end users, causing potential legal issues and damages to the firm’s reputation.

Data Infrastructure🏢

This consideration deals with carefully designing, deploying and maintaining a robust system that transports data from the sources to target locations.

Some of the challenges involved with this include:

Scaling 📈- as a company expands, its infrastructure must also evolve to handle growing requirements and complexities — figuring out and justifying the design decisions that optimize the current data estate. Failure to scale a data infrastructure can result in a company’s data platforms experiencing performance issues or even collapsing when there’s a sudden surge in online traffic or user activity

Continuous maintenance 🌀- Each infrastructure will require continuous updates and patches to keep the business running smoothly, but if maintenance is not planned adequately, these updates could be draining the company’s time and resources.

Determining the right tools ⚒️- New tools in the data engineering space are frequently rolled out, with each boasting different unique capabilities…how do companies find the time and/or leverage the expertise to determine the right tech stack that suits their unique requirements?

A multinational e-commerce firm has an online platform with an ever-increasing user base growing over time. The corresponding user activity data also grows with the user base. At a small scale, the data estate was coded into a few Terraform scripts that would daily spin up a few AWS resources once certain environmental conditions are satisfied. This time, the new challenge is making decisions on how to effectively scale your systems, while figuring out how to balance between cost, performance and future-proofing the current data estate.

Data Acquisition 📡

This involves collecting data from different data sources. This activity can fulfil the current needs of the business or be reserved for future use. For instance, data can be acquired by a company to study customer behaviour on a range of their products on their app.

There are challenges associated with collecting data that include:

Numerous data sources 🗃️- Pulling data from several different sources can easily get complicated especially when each could contain its unique formats and structures

Privacy concerns 🔐- Laws and regulations around privacy are becoming stricter, and rightly so. The main challenge for companies is figuring out how to collect and handle data in a way that respects user privacy

Continuous acquisition🔁 — Creating and managing streaming engines that continuously ingest data can be resource-intensive and hard to manage constantly. This requires additional efforts in maintaining a smooth, uninterrupted flow of data into the ideal locations.

A data engineer writes several custom Python modules that use Selenium to continuously scrape customer sentiment data across different social media platforms, dumps them into S3 buckets and ingests them into a data platform. Then there’s a sudden surge in user activity, causing the reporting tools to crash due to inadequate resources, and now the data engineer is struggling to gather resources to restore the service to get business-as-usual up and running again.

ChatGPT🗣💬🤖

ChatGPT is the AI chatbot created by OpenAI that was made publicly available in November 2022. Users of ChatGPT can conversate with its large language models (GPT-3.5 & 4 as of this writing) in a human-like manner to gain insights into many topics you would find on the internet (up to September 2021).

Photo by Mojahid Mottakin on Unsplash

Strengths of ChatGPT💪

ChatGPT has excelled in many areas, including:

Dynamic conversations🗣️ — ChatGPT can answer questions and handles queries in a human-like manner

Generative text engine📝- It excels at crafting text in the form of emails, literature and even programming scripts

Depth of knowledge 📚🌐- It provides comprehensive insights into a broad range of subjects by drawing from the extensive data from the internet it’s trained on

Role-playing🎭 — ChatGPT can channel its extensive knowledge by acting out different roles it’s given by the user like a tutor, executive, professor etc

Limitations of ChatGPT⚠️

However, there are areas where the publicly available ChatGPT falls short:

Learning curve 📈😓- Engineering quality prompts that generate valuable outputs from ChatGPT is almost a skill in itself that could demotivate prospects to using the tool

Feature accessibility🔐💵 — Exciting features like ChatGPT Plugins and Code Interpreter are only accessible to paid subscribers

Limited commercial use 💼- The chatbot is too generic for business use cases that require specialized and niche domain knowledge

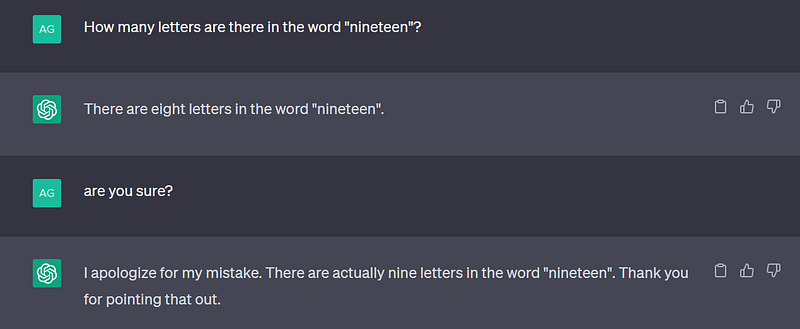

Hallucinations👻 — ChatGPT is infamous for providing factually incorrect answers confidently when the right answers are outside of its training data

Privacy concerns 🔐- OpenAI are not currently transparent with how they are using the data collected to develop their AI products, causing companies to hesitate to share their sensitive data with them through their tools

But we are now witnessing the rise of many AI language models and chatbots including Google Bard, Claude, Falcon LLM, Dolly 2.0 etc, that claim to offer unique capabilities that are absent in the publicly available ChatGPT. But is this enough?

Why and when you should build your own AI tools🤔💭

So now that we’ve explored these issues, let’s now move into some of the reasons we would want to craft our own AI tools under these circumstances:

Customized requirements🎯 — To create in-house tools that are tailored precisely to your unique operational context, needs and tasks

Advanced capabilities 🚀- Instead of being restricted to a few capabilities, why not create a tool that includes all the advanced capabilities ChatGPT would have any of the additional costs or subscription tiers included?

Increasing accuracy 📊- Developing tools that allow us to control the accuracy and quality of the responses provided can allow us to refine them to more commercial use cases

Governance over sensitive data 🏢- This route will enhance security over PII and other sensitive data because there is complete transparency within the business over how the data is used, processed and maintained over time, reducing the legal and reputational risks that would have been caused if other alternatives were taken.

Benefits of custom AI tools in data engineering✅

Imagine AI chatbots that are plugged into your databases, ETL pipelines, data platforms and orchestration job. What benefits can you expect from chatbots like these?

Let’s explore a few:

Saves time ⏳- Less time spent on troubleshooting means more time can be channelled to more creative activities that accelerate value across your business operations and customer experience

Reduces manual efforts 👨💻- Less physical effort in performing certain tasks frees up more energy to be channelled into other productive activities

Reduces cognitive efforts 🧠 — Leaving AI to perform the mental heavy lifting for tasks that require vast amounts of mental energy can preserve cognition and creativity in other areas requiring it

Saves money 💰- From a business perspective, spending less money on human labour could free up capital to invest in other business-critical functions and initiatives

Use cases of AI tools🧰

Here are some of the capabilities of AI chatbots built using custom tools:

- To convert text to software💬📲

- create business + productivity tools, web apps, debugging + auditing platforms, visualization dashboards, flowcharts + diagrams etc

2. To generate/return text based on text provided📝💡

enhance decision making

spark brain-storming sessions and ideas

solving problems and walk you through its thought process

create drafts, wireframes, plans, campaigns, strategies, and roadmaps

reads documentation, research papers, reports,

writes summaries, emails, articles, legal documents, blogs, policies, templates, boilerplate code

3. To trigger automated workflows⚙️🔄

Adhoc or periodic ETL pipelines

Data entry jobs

Data orchestration jobs

Sending reports, notifications, emails

workflows that generate structured AND unstructured data

a. structured data = tables organised into rows and columns

b. unstructured data = text, images, videos and audio by feeding text into other generative AI technologies and APIs

Photo by Glenn Carstens-Peters on Unsplash

Does every data engineering team need custom AI tools?🤔💭

If any team has any process that is manual, repetitive and time-consuming, AI can potentially this from a painful to a painless business process (or at least a less painful one), in one way or another. Any process within this scope could be managed by an AI tool. However, whether every team should start making custom AI tools is another question entirely. There has to be a compelling justification for creating these tools because this would require talent and industry-specific knowledge, which are currently scarce and expensive in the data engineering space.

There also has to be an environment within each team and company that is conducive to it too. The benefits AI promises do sound appealing, but only if the engineering teams have an environment that is conducive to it. The company’s ecosystem needs to be able to adopt, maintain and develop AI tools in a scalable manner. Although the capabilities of different AI tools are appealing, their benefits will only materialize if the environment is conducive to it for the long term, otherwise, they will do the opposite and accrue technical debt across the data estate.

This ecosystem is a by-product of a robust AI and data strategy that is in harmony with the business objectives. But what would an ecosystem that supports AI tools like like? This would include (and certainly not limited to):

Innovative culture 🚀- an environment that encourages trial and errors by giving engineers the freedom to experiment and fail without consequences

Good data governance framework 📚- systems that allow engineers to access, store and process the data required for the AI and data experiments with ease

Leadership support 🤝- active involvement from leadership to ensure the ecosystem contains the resources required for AI tool development and implementation while trusting the developers’ capabilities

Right infrastructure 💻- ensuring the computing resources and assets to accommodate these AI tools are present for smooth operations

Collaboration 🫂- having the tools available for developers to work together on projects and share knowledge easily

Photo by Andrew Neel on Unsplash

How to prepare your team for AI tooling🫡

- Highlight your business processes 📝- make a comprehensive list of your business processes either on paper or in a database

2. Evaluate each process ⚖️- Assign scores or grades to each process based on:

Severity level — How high are the stakes of this process for the business?

Priority level — How much of a priority is this process to the business objective?

3. Highlight their problems 💢- Identity which processes contain any of the following challenges:

Manual — how much human labour is invested into this process?

Repetitive — how often is this process conducted?

Time-consuming — how much time does it take for this process to be completed, implemented and/or managed?

Expensive — how much money does it cost to conduct this business process?

Error-prone — how often do errors occur with this process?

Trivial — how boring or tedious is this business process for you or your team member(s)?

4. Research the tools available 🔍- Investigate the tools that are already addressing these pain-points

5. Develop a prototype 🤕- Use these tools to create a proof-of-concept product at a small scale in a controlled test environment

6. Demo the prototype 🎥- Present the prototype to the relevant stakeholders.

The presentation should be centered around how adopting this tool can benefit the business by solving the problems your team experiences.

Highlight how addressing these pain points directly ties to the business’s expected ROI if adopted only if it actually does

7. Develop a roadmap 🗺️-

If the demo is successful and the decision-makers approve implementation, create a roadmap to safely and securely roll out into production.

If the prototype isn’t approved, understand the reasons why and work towards alternative solutions that still address these pain points and offer the highest ROI in line with the business objectives

Conclusion🏁

We have touched on some of the problems data engineers face in their routine but necessary activities and included some of the ways AI tools can support our efforts in alleviating the pain points listed too.

In part 2, I’ll walk you through some AI solutions and a corresponding boilerplate architecture you can use to kick-start your development of these AI tools. Look out for more technical articles on this series, I can assure you it’ll be worth it!🔥

Feel free to reach out via my handles and share your thoughts, questions and queries and I’ll be happy to assist where I can: LinkedIn| Email | Twitter | Tiktok